5 Ways AI Makes Art More Accessible to Everyone

AI is transforming how people experience and access art, breaking down barriers for individuals with disabilities, language differences, and sensory needs. Here's a quick look at how AI is reshaping art accessibility:

- Visual Recognition for Blind Visitors: Tools like Aira provide detailed verbal descriptions of artworks, offering independence to blind and low-vision visitors.

- Personalized Museum Tours: Platforms like Museumfy create custom tours tailored to visitors' preferences, needs, and time constraints.

- Real-Time Translation: AI systems enable instant translations of art descriptions and tours in over 30 languages, making museums more visitor-friendly for global audiences.

- Interactive Tactile Experiences: AI-powered installations, such as 3D-printed models and haptic gloves, allow visitors to "touch" and explore art in new, multi-sensory ways.

- Support for Neurodivergent Visitors: AI tools provide sensory-friendly spaces, visual schedules, and personalized storytelling to make art accessible for neurodivergent individuals.

AI is helping museums and galleries create more inclusive and engaging experiences for everyone, regardless of physical, sensory, or cognitive abilities.

1. Visual Recognition Tools for Blind and Low-Vision Visitors

AI-driven visual recognition tools are changing how blind and low-vision visitors experience art. By combining artificial intelligence with human input, these tools deliver detailed, on-demand descriptions of artworks. Institutions like the Smithsonian have already embraced this technology.

The Smithsonian museums in Washington, D.C., along with the National Zoo, use Aira technology. This system employs smartphone cameras or specialized glasses to deliver verbal descriptions of artworks.

"Previously reliant on companions, visitors with vision loss now gain instant, independent access at the touch of a button. In the words of one recent user, 'This revolutionizes the way people with vision loss experience museums.'" – Beth Ziebarth, director of Access Smithsonian

These tools offer two main ways for users to explore artworks:

- Attribute-based exploration: Provides specific feedback about individual elements when touched.

- Hierarchical exploration: Starts with general descriptions and allows users to delve into finer details.
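The hierarchical mode can be pictured as a tree of descriptions that a visitor walks from the general to the specific. The following is a minimal Python sketch under that assumption. The `DescriptionNode` class, the `explore` function, and the example painting are hypothetical illustrations. They are not the data model used by Aira or the Smithsonian.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DescriptionNode:
    """One level of an artwork description (hypothetical data model)."""
    label: str
    text: str
    children: list[DescriptionNode] = field(default_factory=list)


def explore(node: DescriptionNode, path: list[str]) -> str:
    """Follow a path of labels from the general overview down to finer detail.

    An empty path returns the top-level overview; each label in the path
    descends one level. Returns the text of the deepest node reached.
    """
    current = node
    for label in path:
        match = next((c for c in current.children if c.label == label), None)
        if match is None:
            raise KeyError(f"No detail named {label!r} under {current.label!r}")
        current = match
    return current.text


# Hypothetical example artwork, described from general to specific.
painting = DescriptionNode(
    "overview",
    "A landscape painting of a river at sunset.",
    [
        DescriptionNode(
            "sky",
            "The upper half is a sky of orange and pink clouds.",
            [DescriptionNode("sun", "A low, pale sun sits just above the horizon.")],
        ),
        DescriptionNode("river", "A wide river runs diagonally across the lower half."),
    ],
)

print(explore(painting, []))              # general description first
print(explore(painting, ["sky", "sun"]))  # then drill into finer detail
```

Attribute-based exploration would skip the tree walk and map each touched element directly to its own description.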

The Smithsonian has also developed an open-source assistive tech suite, led by Ian McDermott. This includes a handheld device that pairs tactile exploration of artwork with audio descriptions. It uses specialized vector and raster files to make this possible.

In Prague, the National Gallery’s "Touching Masterpieces" exhibition introduced haptic glove technology. This innovation turns sculptures into virtual objects that visitors can "touch" digitally. One participant shared their perspective:

"Art is not always explainable just by words. The element of touching it and feeling it is missing…reading about it is not the full experience. It shouldn't be."

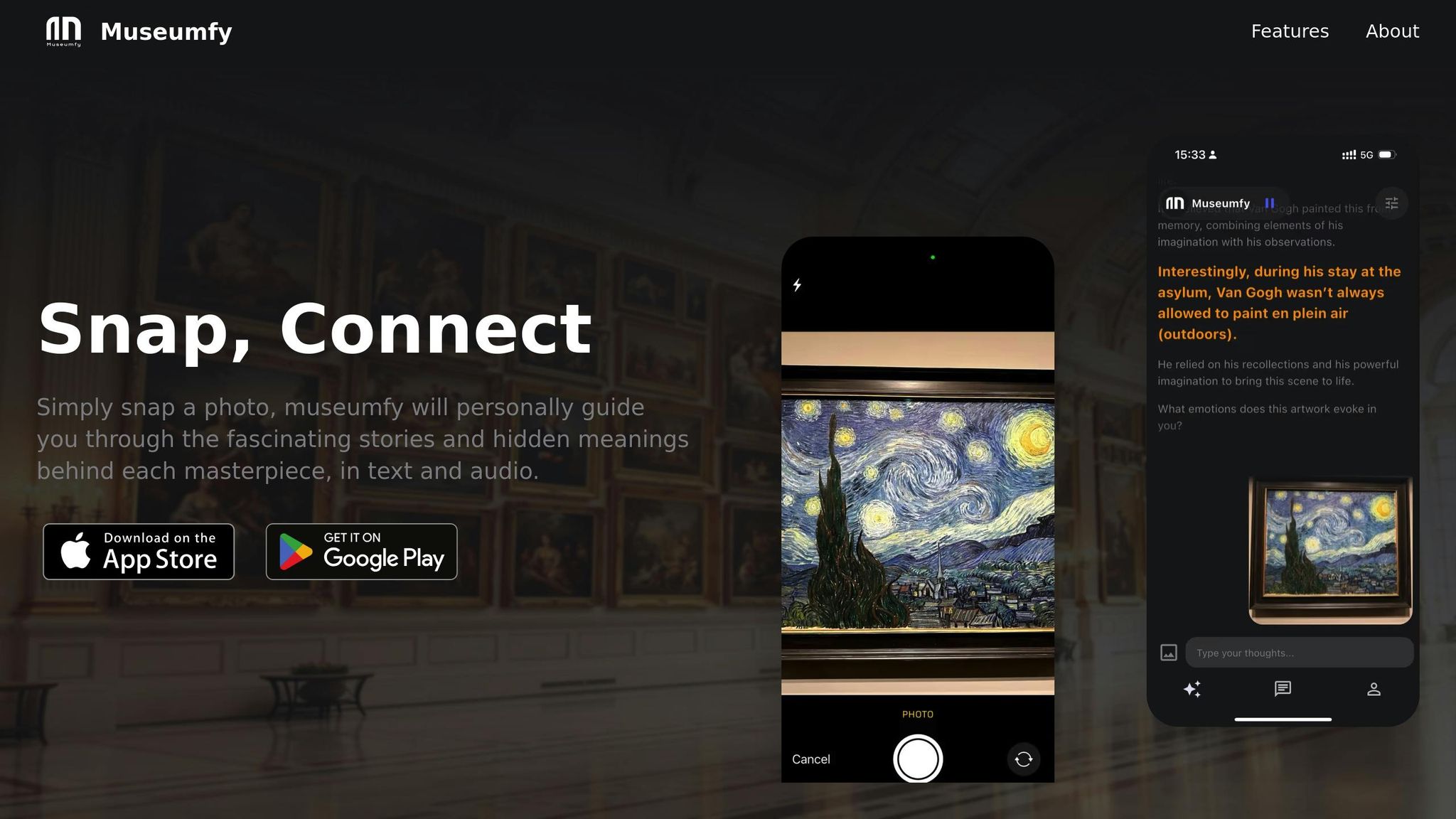

2. Museumfy: Smart Digital Museum Tours

Museumfy uses AI to transform how people experience museums. The platform creates tailored digital tours that cater to each visitor's preferences and needs, going beyond the limitations of traditional audio guides. By weighing each visitor's language, interests, and available time, Museumfy aims to deliver a more engaging and personalized interaction with art.

When visitors use the Museumfy app, they provide three key details:

- Preferred language, including options like American Sign Language or audio descriptions

- Topics or artworks of interest

- Available time

With this input, the app generates a custom tour route, helping visitors make the most of their time while focusing on the pieces they care about most. This approach highlights how AI can make art more accessible to everyone.
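Museumfy has not published how it builds its routes, so the following is only a minimal sketch of the general idea, written in Python. It assumes that each artwork carries a set of topic tags and an estimated viewing time. It then greedily picks the best-matching pieces that fit the visitor's time budget. The `Artwork` fields, the `plan_tour` function, and the sample collection are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Artwork:
    """A piece in the collection (hypothetical fields for illustration)."""
    title: str
    tags: frozenset[str]
    minutes: int  # estimated viewing time


def plan_tour(collection: list[Artwork], interests: set[str],
              available_minutes: int) -> list[Artwork]:
    """Greedy sketch of a personalized tour.

    Artworks are ranked by how many of the visitor's interests they match,
    with shorter viewing times first among ties. They are then added in that
    order while the running total stays within the time budget. Artworks that
    match no interests are skipped, and walking time between rooms is ignored.
    """
    scored = [(len(art.tags & interests), art) for art in collection]
    scored = [(score, art) for score, art in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1].minutes))

    tour: list[Artwork] = []
    used = 0
    for _, art in scored:
        if used + art.minutes <= available_minutes:
            tour.append(art)
            used += art.minutes
    return tour


collection = [
    Artwork("Harbor at Dawn", frozenset({"landscape", "impressionism"}), 10),
    Artwork("Portrait of a Weaver", frozenset({"portrait", "textiles"}), 8),
    Artwork("River Study", frozenset({"landscape"}), 5),
    Artwork("Abstract No. 4", frozenset({"abstract"}), 7),
]

tour = plan_tour(collection, {"landscape", "impressionism"}, available_minutes=15)
print([art.title for art in tour])
```

Because the selection is greedy, it can miss a combination that fits the time better. A real planner could instead treat route building as a knapsack or orienteering problem and account for walking distances between galleries.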

During a trial at the Smithsonian American Art Museum in January 2024, personalized tours showed higher completion rates than standard linear audio guides.

"We use these inputs to come up with a trail that you can reasonably squeeze into the timeframe allocated and that appeals to your individual tastes and preferences, based on the collections available." - Thanos Kokkiniotis, Museumfy CEO and Head of Product

One notable example from the Smithsonian was a visitor with a hearing impairment who enjoyed a customized American Sign Language tour.

"Personalized tours achieve far higher completion and net promoter scores, with social media feedback confirming their positive impact." - Thanos Kokkiniotis

3. Real-Time Translation for Art Descriptions

AI is breaking down language barriers, making art accessible to a global audience. With 20% of the U.S. population speaking a language other than English at home, the need for multilingual options in museums is clear.

AI-powered translation systems can now process over 8,000 words a day, far more than traditional translation methods. This allows museums to provide instant translations of artwork descriptions, historical details, and guided tours for international visitors.

Research shows that 75% of people are more engaged when content is presented in their native language. This has led major institutions, like The Louvre and The British Museum, to adopt AI translation tools. These tools, available through mobile apps or smart glasses, offer real-time translations in over 30 languages.

Cost efficiency is another benefit. Museums using AI translation report savings of 20% to 30% compared to traditional methods. These savings often enable them to offer translations in more languages, reaching even larger audiences.

Interactive installations showcase the true power of this technology. For instance, The Metropolitan Museum of Art uses translation kiosks that deliver instant, accurate translations while retaining the cultural nuances of the artwork. This blend of speed and accuracy ensures that art can be appreciated by everyone, regardless of language.

4. AI Art Experiences You Can Touch

Interactive AI installations are transforming how people engage with art by creating multi-sensory experiences. For example, the Prado Museum's "Touching the Prado" exhibition uses 3D-printed models that allow visitors to explore art through touch. These models include touch-sensitive areas that activate localized audio, offering both detailed explanations and emotional context. This method goes beyond Braille, which fewer than 10% of visually impaired Americans can read, and provides a more intuitive way to experience visual content.
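The exhibition's internal design isn't documented here. To show how "touch-sensitive areas that activate localized audio" could work in principle, here is a minimal Python sketch. It assumes that each area is a rectangle in the model's surface coordinates and is linked to one audio clip. The `Hotspot` class, the coordinates, and the clip filenames are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Hotspot:
    """A rectangular touch-sensitive area on a 3D-printed model (hypothetical)."""
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    audio_clip: str  # hypothetical filename of the localized audio description

    def contains(self, x: float, y: float) -> bool:
        """True if the touch point (x, y) falls inside this rectangle."""
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def clip_for_touch(hotspots: list[Hotspot], x: float, y: float) -> str | None:
    """Return the audio clip for the first hotspot under the touch point, if any."""
    for spot in hotspots:
        if spot.contains(x, y):
            return spot.audio_clip
    return None  # touch landed outside every hotspot, so nothing plays


hotspots = [
    Hotspot("face", 40, 10, 60, 30, "face_description.mp3"),
    Hotspot("hands", 30, 50, 70, 65, "hands_description.mp3"),
]

print(clip_for_touch(hotspots, 45, 20))  # a touch on the face region
print(clip_for_touch(hotspots, 5, 5))    # a touch outside any hotspot
```

Real models with irregular shapes would likely use polygons or capacitive sensor IDs rather than rectangles. The lookup step stays the same: a touch location maps to one region, and that region maps to one clip.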

Some recent developments include proxemic audio systems. Microsoft Research's Eyes-Free Art adapts sound based on how close a visitor is to the artwork. As users move around, they hear different layers of sound, from ambient music to detailed descriptions. Artists are also experimenting with these technologies. Anaisa Franco Studio’s "Love Synthesizer" creates an orchestra that responds to touch, while Studio Roosegaarde’s "TOUCH" installation turns spaces into interactive, immersive environments. These tactile innovations align seamlessly with tools designed for neurodivergent art enthusiasts, discussed in the next section.
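To illustrate the proxemic idea described above (different sound layers depending on how close a visitor stands), here is a minimal Python sketch. It assumes fixed distance bands. The band limits, the layer names, and the `audio_layer` function are hypothetical and are not taken from Microsoft Research's Eyes-Free Art.

```python
from __future__ import annotations

# Hypothetical distance bands in meters, ordered from nearest to farthest.
# A visitor hears the layer of the first band whose limit they are within.
AUDIO_LAYERS = [
    (1.0, "detailed description"),
    (3.0, "short summary"),
    (6.0, "ambient music"),
]


def audio_layer(distance_m: float) -> str | None:
    """Choose the audio layer for a visitor standing distance_m from the artwork.

    Returns None when the visitor is beyond the outermost band, so nothing plays.
    """
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    for max_distance, layer in AUDIO_LAYERS:
        if distance_m <= max_distance:
            return layer
    return None


# Simulate a visitor walking toward the artwork.
for d in (8.0, 4.5, 2.0, 0.5):
    print(f"{d} m -> {audio_layer(d)}")
```

A deployed system would also need to estimate distance (for example, from a depth camera) and crossfade between layers instead of switching abruptly.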

Here’s how AI-powered tactile experiences compare to traditional art displays:

| Feature | Traditional Art Display | AI-Enhanced Tactile Experience |
|---|---|---|
| Interaction Method | Visual only | Multi-sensory (touch, sound, movement) |
| Information Access | Written descriptions | Dynamic audio feedback |
| User Experience | Passive viewing | Active exploration |
| Accessibility | Limited | Universal design approach |

Museums are also improving accessibility with lower-tech additions. For instance, the Dallas Museum of Art offers de-escalation spaces equipped with weighted lap blankets and noise-canceling headphones for visitors with sensory sensitivities. Combined with AI-driven, interactive tactile experiences, measures like these help create more inclusive art environments.

5. Tools for Neurodivergent Art Lovers

AI is playing a growing role in making art experiences more personalized and accessible, especially for neurodivergent visitors. Museums are now using AI-driven tools to create environments that cater to diverse needs. For example, the "A Dip in the Blue" app provides visual schedules and accessibility resources specifically designed for individuals on the autism spectrum. It’s structured like an archaeological journey and even gathers data on emotional and sensory responses.

Here’s how museums are enhancing accessibility for neurodivergent visitors:

| Feature | Implementation | Benefit |
|---|---|---|
| Sensory Management | Quiet rooms with adjustable lighting | Helps prevent sensory overload |
| Navigation Support | Digital maps offering detailed venue guidance | Makes moving around easier |
| Content Adaptation | AI-powered storytelling (like in the CHESS project) | Matches individual learning styles |
| Interactive Elements | Multimedia installations that adjust to user input | Promotes multi-sensory interaction |

In addition to navigation and sensory aids, museums are introducing tactile and auditory elements to enhance the experience. For instance, the Portland Art Museum partnered with artist Michael Cantino in 2023 to create tactile graphics of select artworks, such as Thelma Johnson Streat's Monstro the Whale and Christine Miller's Watermelon Portraits. These 3D-printed pieces make it possible to experience art through touch.

"Synthaisthesia creates an audio counterpart to the visual experience of art, enhancing understanding for visually impaired visitors." – Ian McDermott

The Neurodiversity in the Arts Symposium, held at the University of the Arts Helsinki in November 2024, showcased practical ways to make art more inclusive. Accommodations included 30-minute breaks between sessions, live CART captioning, and sensory rooms, all designed to ensure an accessible experience for attendees.

AI tools are also helping museums expand accessibility by offering features like:

- Multilingual translation services

- Audio descriptions that adjust based on visitor location

- Digital guides with customizable sensory settings

- Interactive storytelling that adapts to individual learning speeds

One standout example is the CHESS project at the Acropolis Museum in Athens. This initiative uses AI to craft personalized cultural experiences by tailoring interactive narratives to each visitor's preferences.

Conclusion

AI is reshaping how we experience museums. Tools like Aira at the Smithsonian and IRIS+ at the Museum of Tomorrow are breaking down barriers with features like real-time descriptions and sign language translation. According to a July 2021 survey, over 80% of visually impaired individuals said they would visit museums more often if accessibility improved.

These changes reflect a growing effort to make art more accessible to everyone. Angie Judge, CEO of Dexibit, puts it into perspective:

"Just as with the age of the internet and the digital revolution, AI will quickly create a world of the haves and have nots. I hope the museum sector will find itself on the right side of that equation."

Here’s how AI is making a difference:

| Impact Area | Before AI Integration | After AI Integration |
|---|---|---|
| Language Support | Limited to a few major languages | Real-time translation in over 20 languages |
| Visual Accessibility | Basic audio guides | AI-powered descriptions and virtual tours |
| Personalization | Generic experiences | Tailored content based on individual preferences |
| Interactive Learning | Static displays | Engaging, responsive exhibits |

Institutions like the Barnes Foundation, with its interactive app, and the Louvre, offering immersive VR programs, show how AI is enhancing the museum experience. The Met's collaboration with Codemantra, which converted 700+ art monographs into ADA-compliant e-books, is another step toward making art accessible to all. With features like detailed descriptions, real-time translation, and personalized tours, museums are only scratching the surface of what AI can achieve.